Of course, Wikipedia is policed and half-written by unpaid bots.

Because no human would ever stoop so low as to write voiceless, rote information and expect to get paid for it, Wikipedia has largely relied on bots since its launch. The BBC reports that the bots have only grown in number and skill, becoming better aggregators than even your most humble intern.

They delete vandalism and foul language, organise and catalogue entries, and handle the reams of behind-the-scenes work that keep the encyclopaedia running smoothly and efficiently and keep its appearance neat and uniform in style.

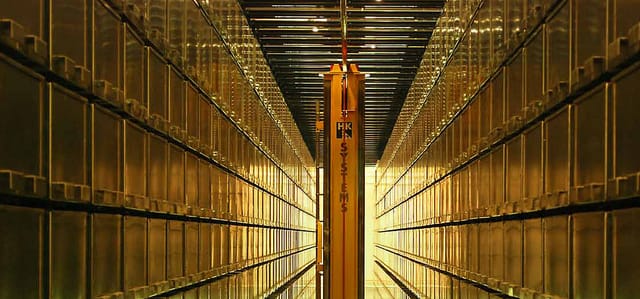

In brick-and-mortar library terms, bots are akin to the students who shelve books, move stacks from one range to another, affix bar codes to book spines and perform other grunt tasks that allow the trained librarians to concentrate on acquisitions and policy.

Naturally, the data entries about asteroids and tiny towns in America are often truncated, bullet-point listings. An idealistic intern or student can snap under pressure this banal, but can a bot break? Wikipedia seems divided about whether this content is worth generating or dangerous to the stability of the site, but it does not fear a Terminator revolution.

For one, a bot is not like an automobile – if a part fails while in operation it will shut down rather than careen into something.

Bots with the rights to delete pages, block editors and take other drastic actions can only be run by editors already entrusted with administrative privileges.

Naturally, a human has to program the cataclysm, which is not to say it’s out of anyone’s reach. I suppose it’s healthy to always consider the potential for a maniacal vacuum of information, so just have faith.