More Imagination, Not Less

Gordon E. Moore, who co-founded Intel and is now a billionaire philanthropist, is perhaps best known for the maxim he posited in a 1965 research paper. Moore’s Law, as it came to be called, states that the number of transistors that can be placed affordably on an integrated circuit will double roughly every two years (Moore’s original 1965 estimate was a doubling every year, which he revised to two years in 1975), and it appears to have held for nearly half a century. Simply put, the more transistors you can cram onto a CPU, the more computing power you have available.

Transistors may be just one of the many important components in a modern computer, but their multiplication has turned out to correlate rather nicely with overall increases in power. So rather than simply predicting a trend in an area of manufacturing few people completely understand, Moore appeared to predict the rate of advancement for all computer-based technology.

It follows that game systems have increased their processing capabilities roughly along the lines set out by Moore. Each superior generation of consoles has brought not only another hand on the Twister mat of visual fidelity, but also a related ability to simulate more complex systems. Huge amounts of memory are needed to simulate a globe’s worth of soccer leagues in Football Manager or to track the status of thousands of characters and objects in Fallout, and those same resources can be used to tell more interesting stories. Theoretically, at least, Heavy Rain’s characters and interactivity make for a more successful storytelling device than Monkey Island’s. My concern is this: as these capabilities grow so quickly, is something else being eroded?

——-

When we play games, we are always being asked to fill in gaps with our own imaginations. Alongside the actual button-pushing interaction, this mental activity helps to engross the player and contributes to their unique experience. Alongside tests of skill, I think these gaps are part of the challenge that games offer.

When you stare at a loading screen or hear the same line of background dialogue for the hundredth time, what are you thinking? Do you accept these as an annoyance, an unavoidable limitation given the enormous effort and complexity involved in producing a videogame? Or do these presentational hiccups have some use?

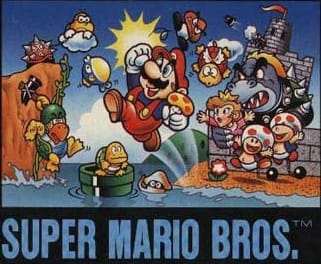

Historically, the first gap was visual. Have you looked at the box art for Super Mario Bros. recently? Compare it to what the game actually looked like when you played it, not the way you remember it looking. The European box art for Mario’s first adventure is a Saturday-morning-cartoon group shot that defined pretty clearly the look and tone the series would take for years. The NES may not have been able to render that image with much fidelity, but Nintendo has been making Mario look more and more like the original box art ever since.

As representational games have become increasingly detailed and moved incrementally closer to photorealism over the years, the need for players to imagine the world of their character has lessened. This is especially true in the first-person perspective: you may view the world through the eyes of your avatar, but however beautiful it may look, what effort did you make to earn that beauty? Stare at Bethesda’s panoramic views, Valve’s lifelike characters, or thatgamecompany’s fine sand, and the surfaces are so smooth that there are no visual gaps left to fill.

If the ability to produce high-fidelity visuals has made visual decoding redundant, and if part of a game’s challenge is to flex the imagination of its player, where does the player’s mind wander next? Today, it might be found in the many interactions between player and game, and between avatar and environment: what I’ll loosely term here systems.

Skyrim’s silent protagonist has thousands of lines of dialogue, its amazing sense of place doesn’t extend to the connection between interior and exterior, and its characters frequently fail to understand the protagonist’s place in the world. Skyrim’s flawed brilliance leaves so much to the imagination that whole stories can be told in its gaps. My character can explain the frequent loading screens as gates and doorways that hardly open at all:

I have to squeeze myself gently through the gap in the solid oak, shutting my eyes for fear of splinters. My imagination galloping, behind my eyelids appear beasts that I have fought, or weaponry I crave. Part polymath—I can keep count of hundreds of arrows—and part eidetic—I can absorb books in a moment.

Approaching the blacksmith, Adrianne, I know what I’m going to say. As in all my day-to-day conversations, I can’t help but speak from a script. “I’d like to buy a horse,” I say at the stables. “What have you got for sale?” I ask at the blacksmith’s, and the general store, and the tavern, and the hunting shop. I’m anxious about deviating from these lines, in case I stumble over the words and make a fool of myself, in case I speak too quietly, in case my voice breaks.

The interiors of the series’ dungeons are notoriously repetitive, yet they are often arranged in ways that hint at a story as the player journeys through them. Often this is achieved with clues to the fates of previous explorers (their equipment, their cooling campfires, their bodies), but it is the player’s desire to help conjure the other halves of these stories that dictates how rewarding they find these sections of the game.

——-

What this suggests is that many contemporary games still offer gaps large enough for the player’s imagination to thrive. Is there, however, a point in the future when Moore’s Law will allow developers to create games so seamless that even their interactivity offers little for the mind to play with?

Hypothetically, we may one day see computers and consoles that are no longer themselves a barrier to development. It can be annoying now to see frame rate sacrificed for detail, or vice versa, but past limitations were far more severe. Chris Kohler describes the tricks developers used to work around the constraints of the Atari 2600, which had only 128 bytes of RAM:

If you’ve ever seen little black lines appear at the left edge of the screen while you’re playing a VCS game, those are bits of the game’s code where the program is taking too much time doing other calculations, and it can’t draw on the screen, leaving it blank. The black bar on the left-hand side of the Pitfall! screen […] was Activision designers’ solution — they cut out part of the gameplay field in exchange for more processing time.

Without these barriers, with instant loading, systems modelled on reality, and stories told with as much detail and subtlety as necessary, what kind of games would we have? They might be beautiful to behold, but I’d wager they would also be more forgettable and less challenging for the player.

The gaps in detail left by Mario’s early, pixelated form mean that the impression of his good-natured persistence is formed from a number of sources: his movement, the sounds he makes, the colors of his clothes and, importantly, sufficient time spent with the game itself.

Just as it would be foolish to suggest that a truly seamless game can be produced in reality, logic tells us that the exponential growth described by Moore’s Law can’t go on forever. This decade, we are reaching the point at which even Moore’s Law must slow down; it has been predicted that by 2013 the transistor count will be doubling only every three years. An increase in power doesn’t always correspond to an increase in performance, either. Software development can result in bloat: the addition of features to fill up the available processing power. Does your copy of Microsoft Word really run any better than the word processor you were using five or ten years ago? Mine doesn’t.

Similarly, we’re being told to expect a slowdown in the fierce hardware progression that the games industry still seems to thrive on. This is already becoming evident in a number of ways. The console cycle is lengthening, and the next generation isn’t expected to leap forward in hardware as far as extrapolation might suggest. Really huge games that try to do everything (creating an authentic world, running complex systems, telling interesting stories) are taking much longer to create.

This is the industry’s bloat: unless development techniques alter drastically, the only way to make something bigger is to throw more people, money, and time at it, with efficiency falling as game elements grow. The problem is that the struggle to make a seamless game isn’t the struggle developers should be making. Players need to be challenged, because without challenge games turn into Michael Bay movies, and imaginative challenges are just as important as skill challenges.

In a 2005 interview, Moore said, “Moore’s law is a violation of Murphy’s law. Everything gets better and better.” Whenever it looked as though the limits of technology had been reached, some innovation appeared (dynamic RAM in 1967, or laser photolithography in 1982) to allow Moore’s Law to continue for a little longer. Who’s to say that something won’t come along to extend its life once again? And that’s the lesson games should take from Moore: advocate relentless progression if you must, but innovation and challenge should be at the heart of progress, not obstacles to it.