In 2013, the New Museum in New York presented an exhibition titled NYC 1993: Experimental Jet Set, Trash and No Star (named after an album by Sonic Youth). It curated art from the year of Bill Clinton’s inauguration. Soon after, New Jersey’s Montclair Art Museum featured Come As You Are: Art of the 1990s (named after a song by Nirvana). Jason Farago, writing about these shows for the BBC, posited that “this wave of 1990s shows marks a welcome effort to impose historical rigour on a period we still sometimes call ‘contemporary,’” but, “they reveal that the gap between then and now might not be as gaping as presupposed, and that, in aesthetic terms at least, the ‘90s are still going strong 15 years past their expiration date.”

That it’s challenging to identify a distinctly 90s aesthetic should be unsurprising for anybody whose formative years tracked the contours of the decade. When I was an adolescent in the 90s and beginning to think seriously about conforming to or opposing what I thought of as the mainstream, it felt as if the very concept of a mainstream meant less and less. After all, the biggest bands of the day, who acted as my moral barometer, performed what was called ‘alternative music.’ That’s because, during the 1990s, mainstream and alternative alike were subsumed into a voluminous, postmodern pastiche of information culled from across decades, made possible by the emergence of the internet in the home. Information grew like the feedback from a guitar and still resonates today, lasting precisely because it’s indistinct and impossible to talk about in relation to a unified or defining ideology or principle. Imposing historical rigour upon the 90s, as those museum exhibits did, only contributes to its heteroglossic cacophony; the 1990s are the decade in which we stopped writing about history in terms of authors and decades.

At the tail end of the 80s, a decade marked by Reagan’s nostalgia for a “shining city on a hill,” it felt as if the future was rushing towards us in the form of the 21st century. In 1990, when I was 10, Germany reunited, Nelson Mandela was freed, and the Hubble telescope was launched. Up until then, the sense had been that decades and presidents could serve as chapter headings in a compartmentalized experience of linear history. Then the internet arrived and blew those compartments up, fundamentally changing the way human beings communicate with one another.

My father worked in telecommunications, so my household was an early adopter of the internet. It was via the internet that my impressionable teen self was deluged with factual horrors to counterpoint Reagan and Bush I’s sepia-toned idealism. Two years into the decade we got the Bosnian Genocide, Rodney King riots, and Waco, Texas. Soon after came the Rwandan genocide. Francis Fukuyama’s The End of History and The Last Man (1992) codified his 1989 essay reifying American capitalism as the evolutionary end-point among right-wing political thinkers. The fall of the Soviet Union and the emergence of Bill Clinton’s New Democratic “Third Way” seemed to confirm Fukuyama’s thesis that laissez-faire capitalism was and always would be the ruling paradigm. When Clinton declared in his 1996 State of the Union address that, “The era of big government is over,” aspects of Fukuyama’s neo-Conservatism and Clinton’s neo-Liberalism became indistinguishable. Teens found themselves caught between the forces of their grandparents’ overweening nostalgia for a time long-gone, their parents’ 60s hippiedom turned into corporate pseudo-activism, and the sobering events of the world delivered through the exponential growth of internet use in the home. The effect was not that the 1990s could be tidily arranged alongside the decades that preceded it, but rather that the very notion of decades as coherent narratives with aesthetic tendencies was undermined.

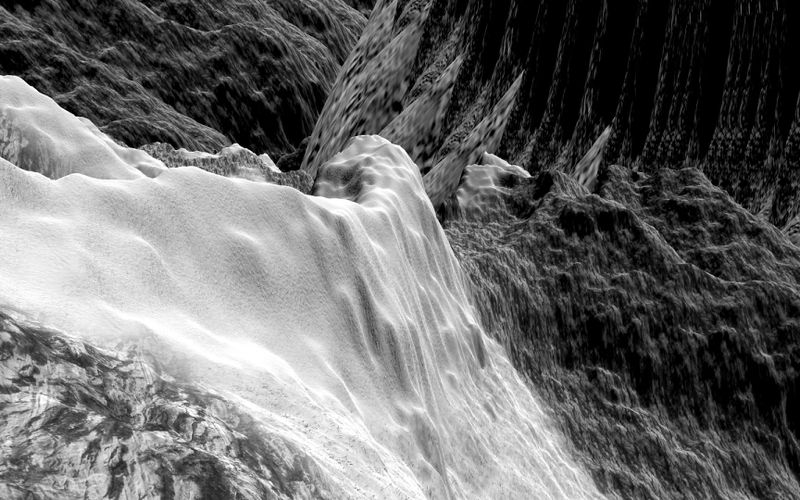

In preparing for this article I thought about how I would summarize my experience of the aesthetic of the 90s, and the best I could come up with was “extreme,” a term that, in isolation, is so relative as to be meaningless. In comic books, the publisher Image launched with a grittier line of amped-up violence and ultra-exaggerated anatomy. ‘Extreme sports’ entered our lexicon in order to market the X Games, and American Gladiators made what was once the stuff of dystopian cinema a reality. Shock jocks like Howard Stern rose to national prominence. Apple appropriated political and subcultural heroes for its “Think Different” campaign. In music, grunge and alternative implied a form of nihilism, both stubbornly unengaged (think Kurt Cobain’s stream-of-consciousness “a mulatto / an albino / a mosquito / my libido” approach to lyric-writing) and self-hating (think Kurt Cobain’s suicide), all while cycling through an intense loud-quiet-loud dynamic that emphasized volume through contrast. The 1990s did not have an aesthetic so much as a dynamic.

The videogames of the 1990s, too, can be seen to represent a paradoxical encapsulation of and departure from the norm. While their style often embodied a rush towards the extreme, their mechanics and design suggest a golden age of invention: a space in which nuance and creativity were allowed to exist, where the subtlety of the player’s control over the game drew the player in. The 90s produced a shocking number of what we today consider canonical titles:

Super Mario World (1990)

Civilization (1991)

Street Fighter II (1991)

Sonic the Hedgehog (1991)

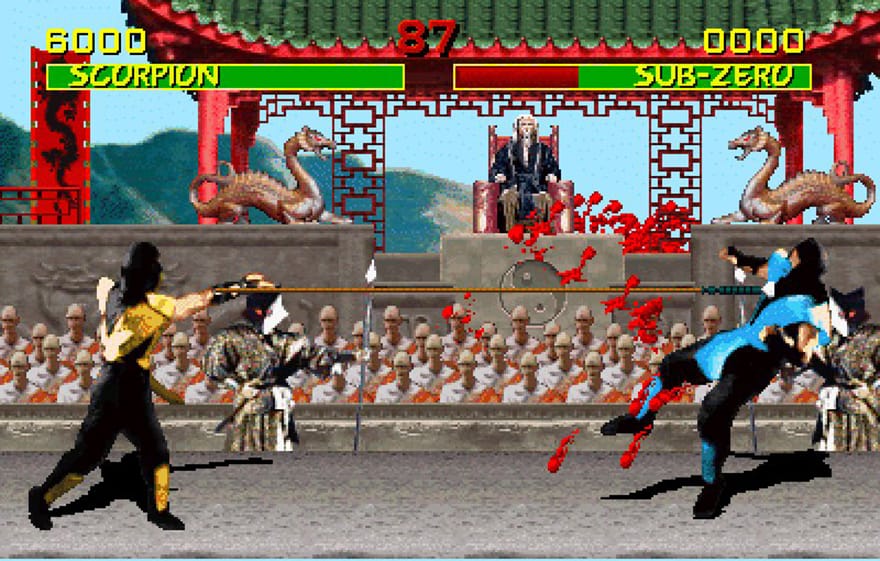

Mortal Kombat (1992)

The Legend of Zelda: A Link to the Past (1992)

Myst (1993)

Secret of Mana (1993)

Doom (1993)

NBA Jam (1993)

System Shock (1994)

Donkey Kong Country (1994)

EarthBound (1994)

Wipeout (1995)

Chrono Trigger (1995)

Super Mario 64 (1996)

Tomb Raider (1996)

Quake (1996)

Diablo (1996)

Resident Evil (1996)

Final Fantasy VII (1997)

GoldenEye 007 (1997)

The Legend of Zelda: Ocarina of Time (1998)

Half-Life (1998)

Metal Gear Solid (1998)

Tony Hawk’s Pro Skater (1999)

I mean, look at that list. Some of these games were the first of their genre, single-handedly inventing or advancing stealth mechanics (Metal Gear Solid), multiplayer shooters (GoldenEye 007), survival horror (Resident Evil), 2D fighters (Street Fighter II and Mortal Kombat) and physics-based puzzles (Half-Life). Some established a scope of story that had never before been encountered (Myst, Civilization, and Final Fantasy VII). When we talk about videogames in the 2010s, the games of the 1990s comprise the very vocabulary we use, because the way those games felt was both good and new.

It helps, too, that these games still feel good when we play them today, be it Cape Mario’s swooping flight in Super Mario World or a perfectly landed trick in Tony Hawk. While it’s possible to be nostalgic for some of the 8-bit classics of the 1980s, what usually happens upon playing them for longer than five minutes is a realization that they don’t conform to what we think of as a quality game today. They’re maddeningly hard and stubbornly unaccommodating, with steep learning curves, little to no direction or story, terrible physics, and bad graphics. (What sadist invented the notion of three lives and zero continues?) The 1990s classics, on the other hand, are being ported to contemporary systems, finding new life on handhelds and emulator consoles.

What many of these titles had in common, and what made them children of the 90s, was their promise of more: more speed, more violence, a grander scope. Touchstones like Wipeout’s techno soundtrack, the vast number of hours required to finish Final Fantasy VII, the absurd size of Lara Croft’s bust, and Mortal Kombat’s fatalities seemed, to those of us in our middle teens, very adult. PlayStation arrived and introduced non-proprietary CD-ROM drives to the console market, not as a peripheral, but as the primary storage medium. The arms race that had begun as a battle between Nintendo’s Super Nintendo Entertainment System (SNES) and SEGA’s Genesis, which was catalogued in the 2014 book ‘Console Wars: Sega, Nintendo, and the Battle That Defined a Generation’, was utterly disrupted. The relatively immense storage capacity of CD-ROMs, combined with the fact that they were cheap to produce, allowed game makers to cram more and more into their games.

Despite this abundance, or perhaps because of it, for every classic game released in the 1990s there were about 10 knockoffs with bad commercial tie-ins and the same emphasis on being “extreme”: Duke Nukem 3D (1996); a plethora of 2D fighting games like Rise of the Robots (1994) and Shaq Fu (1994); almost everything involving The Simpsons; the inexplicable Philips CD-i titles with licensed Nintendo properties, like Hotel Mario (1994) and the nightmare fuel Zelda series; and the ultraviolent Time Killers (1992) and Time Slaughter (1996), which suggested that the inevitable outcome of time travel will be fighters of every era hacking each other’s limbs off. The new possibilities opened up by CD media, combined with the newfound emphasis on excess, led to games whose fundamentals were not so much flawed as non-existent while their aesthetic flourishes were constant and seemingly unmoored from any kind of thematic unity. Simply put, the 1990s opened the floodgates.

Which is why it’s so difficult to talk about 90s aesthetics as something that can be defined, preserved, and subjected to the same kind of rebooting that we’ve become used to with every other decade. The 1990s did not produce a recognizable style so much as the feeling of abundance and an alienation from that feeling. Even subcultures that opposed mainstream commercialism, like grunge musicians, whose second-hand fashion implied an aversion to curated style, whose volume sought to deemphasize structure or coherence, and whose ironic mockery of authenticity or commitment to principles was meant to destabilize norms, were instantly subsumed and reduced to capital, contributing to a monoculture of blooming, meaningless noise. In his 1993 essay “E Unibus Pluram: Television and U.S. Fiction,” David Foster Wallace wrote, “[…] today’s irony ends up saying: ‘How very banal to ask what I mean.’ Anyone with the heretical gall to ask an ironist what he actually stands for ends up looking like a hysteric or a prig. And herein lies the oppressiveness of institutionalized irony, the too-successful rebel: the ability to interdict the question without attending to its content is tyranny. It is the new junta, using the very tool that exposed its enemy to insulate itself.”

I return to the internet because it was a wide-angle lens on the ocean of information whose very abundance would defy anyone’s ability to impose a meaningful narrative framework. The internet fundamentally transformed the notion of nostalgia, hollowing out its utility and rendering the term almost meaningless. To be nostalgic is to retroactively impose a sincere and fond narrative on something, often with the implication that one is remembering it more fondly than it might have been in reality. In order for that to be true, it must be possible to over-value the thing. What the internet did, on the other hand, was allow a person to experience the thing again and again, at very little cost, and so it had the opposite effect of nostalgia: the internet devalues the thing by making it ubiquitous. For a teenager in the 1990s, it can’t be overstated how the abundance and repetition of experience had the effect of reducing that experience’s impact.

The culmination of the 1990s’ confused rush towards the extreme was Woodstock ’99. A person can go on and on about the original Woodstock as a singular event in the chronology of the 60s counterculture because, whether it really was as significant as they’re making it out to be or not, it only occurred once, allowing a person to thereafter be nostalgic about it. The original Woodstock serves as a fixed reference point for myriad takes on what it meant. My generation’s Woodstock, on the other hand, was a prepackaged, heavily commercialized attempt to recycle the singular cultural occurrence of a major music festival, except with a more extreme attitude. The lineup included a series of ‘nu metal’ acts, the apotheosis of grunge’s adolescent, directionless anger, who seemed to rage at the idea of the festival itself. It ended with rape and violence; I watched from a couch in my friend’s basement as Fred Durst of Limp Bizkit egged the crowd on and fires began to spring up in the background. Woodstock ’99 was 200,000 people, at the dawn of the new millennium, carrying out a kind of protest against the very thing they were gathered to observe, not to celebrate love in the spirit of counterculturalism, but to give money to a soulless corporate contrivance for the right to break that contrivance in protest of the fact that the whole affair was utterly meaningless. If there was any kind of recognizable ideology present, it was the depressingly inter-generational ideology of men imposing their will upon women.

Photo by Frank Micelotta/ImageDirect

When it comes to the insatiable need to revisit commercial properties for another profitable round, the 1990s present a tricky proposition. Simply put, it was a dark time. The cultural figures of the day didn’t have much to say, and those who tried were immediately appropriated into a kind of featureless, commercial heteroglossia. It’s only appropriate that David Foster Wallace’s Infinite Jest (1996), a hyper-detailed tome of a novel, would also appear in the 90s. Its story features a medium without distinct content and without end, which renders its viewers incapacitated and uninterested in anything else. The 1990s were the decade in which the concept of an American monoculture crested and disappeared into the postmodern beyond. Everything was accessible, at full volume, for money, and this crassness and crudeness made it subject to endless irony: infinite jest, indeed. I pity the poor souls who try to build a marketing campaign around that.

Header Image provided by Colin Morris on Flickr.